GlobeNewswire, 11.06.18

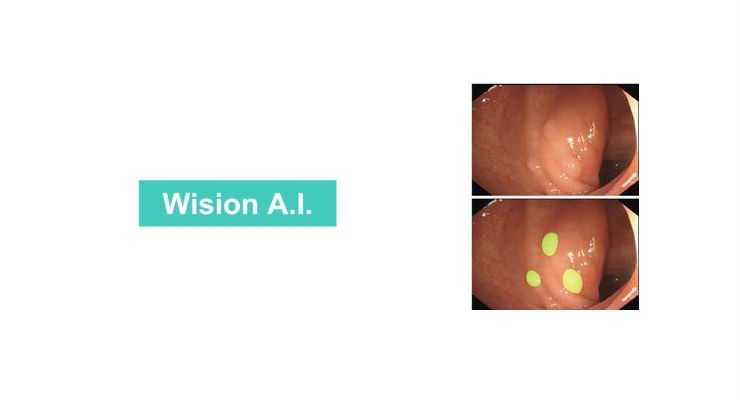

Shanghai Wision AI Co., Ltd, a developer of computer-aided diagnostic algorithms and systems to improve the accuracy and effectiveness of diagnostic imaging, announced results of a study validating a novel machine-learning algorithm that improves detection of adenomatous polyps during colonoscopy. Researchers at Wision AI conducted the study in collaboration with clinicians at the Center for Advanced Endoscopy at Beth Israel Deaconess Medical Center (BIDMC), Harvard Medical School and the Sichuan Academy of Medical Sciences & Sichuan Provincial People’s Hospital, and the results appear in the current issue of Nature Biomedical Engineering. Built on the same network architecture used to develop self-driving cars, the Wision AI algorithm is designed to enable “self-driving” in colonoscopy procedures.

“Previous studies have shown that every one percent increase in the rate of detecting precancerous polyps results in a three percent decrease in the risk of interval colon cancer,” said Tyler Berzin, M.D., Co-Director, GI Endoscopy, and Director, Advanced Endoscopy Fellowship at BIDMC and Assistant Professor of Medicine at Harvard Medical School. “This underscores the importance of accurate polyp detection. The encouraging results obtained using Wision AI demonstrate that a novel deep-learning algorithm can automatically detect polyps during colonoscopy, opening new doors to increasing the effectiveness of screening colonoscopy and enabling a new quality control metric that may improve endoscopy skills.”

Detecting and removing precancerous polyps during colonoscopy is the gold standard in preventing colon cancer, a leading cause of cancer death. However, the adenoma miss rate among the more than 14 million colonoscopies performed in the United States each year is 6 to 27 percent. The inability to recognize polyps within the visual field is a key reason that precancerous polyps go undetected. Studies show that having a second set of eyes on the monitor during colonoscopy procedures can increase detection rates by up to 30 percent. The Wision AI algorithm can serve as this second set of eyes by highlighting polyps directly on the monitor.

A key challenge in developing AI-based algorithms for use in clinical settings is that the dataset used to validate the algorithm is typically very small compared with the development dataset. This can result in “over-fitting” of the algorithm in a manner that limits its efficacy in real-world clinical scenarios. Additionally, in most cases, a single dataset is collected and divided for both training and validation, which may result in similar data being used for both steps and therefore reducing the rigor of the validation process. In contrast, the Wision AI algorithm was validated on large, prospectively developed datasets that were collected independently from the training dataset and were several-fold larger than the training dataset. This more rigorous validation approach that Wision AI utilizes is designed to increase the performance of the algorithm in real-world clinical settings.
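
The overlap risk described above, where a single dataset is divided for both training and validation, can be illustrated with a short sketch. It is not Wision AI's method (the study used independently collected validation datasets); it only shows one common safeguard when a single dataset must be divided, namely assigning whole patients to one side of the split. The function names, the `image_patient_ids` mapping and the 20 percent validation fraction are all hypothetical.

```python
import random
from collections import defaultdict


def split_by_patient(image_patient_ids, validation_fraction=0.2, seed=0):
    """Split images so that every patient lands entirely in one subset.

    image_patient_ids: dict mapping image name -> patient id (hypothetical layout).
    Returns (train_images, validation_images).
    """
    # Group images by the patient they came from.
    by_patient = defaultdict(list)
    for image, patient in image_patient_ids.items():
        by_patient[patient].append(image)

    # Shuffle patients (not images) with a fixed seed for repeatability.
    patients = sorted(by_patient)
    random.Random(seed).shuffle(patients)

    n_val = max(1, round(len(patients) * validation_fraction))
    val_patients = set(patients[:n_val])

    train = [img for p in patients if p not in val_patients for img in by_patient[p]]
    val = [img for p in sorted(val_patients) for img in by_patient[p]]
    return train, val


def shared_patients(train, val, image_patient_ids):
    """Return the patients whose images appear in both subsets (ideally none)."""
    train_ids = {image_patient_ids[img] for img in train}
    val_ids = {image_patient_ids[img] for img in val}
    return train_ids & val_ids


# Toy data: 10 hypothetical patients with 3 images each.
images = {f"patient{p}_img{i}.png": f"patient{p}" for p in range(10) for i in range(3)}
train_images, val_images = split_by_patient(images)
print(len(train_images), len(val_images), shared_patients(train_images, val_images, images))
```

Splitting at the image level instead could place near-identical frames of the same polyp on both sides, which is the kind of overlap that weakens a validation result.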

The algorithm was developed using 5,545 images (65.5 percent containing polyps and 34.5 percent without polyps) from the colonoscopy reports of 1,290 patients. Experienced endoscopists annotated the presence of polyps in all images used in the development dataset, and the algorithm was then validated on four independent datasets: two sets for image analysis (A and B) and two sets for video analysis (C and D).

Key findings from the study include:

- Validation on dataset A, which included 27,113 images from patients undergoing colonoscopy at the Endoscopy Center of Sichuan Provincial People’s Hospital, found a per-image sensitivity of 94.4 percent and a per-image specificity of 95.9 percent. (The per-image sensitivity in a subset of 1,280 images with polyps that are typically hard to detect was 91.7 percent.)

- Validation on dataset B, based on a public database of 612 colonoscopy images acquired from the Hospital Clinic of Barcelona, found a per-image sensitivity of 88.2 percent. Using this dataset extended the validation to a broader patient population.

- Validation on dataset C, a series of colonoscopy videos containing 138 polyps, found a per-image sensitivity of 91.6 percent among 60,914 frames of video and a per-polyp sensitivity of 100 percent.

- Validation on dataset D, which contained 54 colonoscopy videos without any polyps, found a per-image specificity of 95.4 percent among 1,072,483 frames.

- The total processing time for each image frame was 76.8 milliseconds, including preprocessing and displaying times before and after execution of the deep-learning algorithm. Implementation in a real-time system resulted in a processing rate of 30 frames per second with Nvidia Titan X GPUs.

- The authors conclude that this automatic polyp-detection system based on deep learning has high overall performance in both colonoscopy images and real-time videos.
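
For readers less familiar with the metrics in the findings above, the following minimal sketch shows how per-image sensitivity, per-image specificity and per-polyp sensitivity are typically computed. It is an illustration on made-up toy data, not the study's evaluation code. The `FrameResult` type and its fields are hypothetical, and the sketch assumes a polyp counts as detected when the algorithm flags it in at least one of its frames.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class FrameResult:
    has_polyp: bool                 # ground truth from the annotating endoscopist
    flagged: bool                   # whether the algorithm highlighted a polyp
    polyp_id: Optional[str] = None  # which polyp the frame shows (video data only)


def per_image_sensitivity(frames: List[FrameResult]) -> float:
    """Share of polyp-containing frames that the algorithm flagged."""
    positives = [f for f in frames if f.has_polyp]
    return sum(f.flagged for f in positives) / len(positives)


def per_image_specificity(frames: List[FrameResult]) -> float:
    """Share of polyp-free frames that the algorithm left unflagged."""
    negatives = [f for f in frames if not f.has_polyp]
    return sum(not f.flagged for f in negatives) / len(negatives)


def per_polyp_sensitivity(frames: List[FrameResult]) -> float:
    """Share of distinct polyps flagged in at least one frame (assumed definition)."""
    all_polyps = {f.polyp_id for f in frames if f.has_polyp}
    detected = {f.polyp_id for f in frames if f.has_polyp and f.flagged}
    return len(detected) / len(all_polyps)


# Toy data: two polyps over five frames, plus four polyp-free frames.
frames = [
    FrameResult(True, True, "p1"),
    FrameResult(True, True, "p1"),
    FrameResult(True, False, "p1"),
    FrameResult(True, True, "p2"),
    FrameResult(True, False, "p2"),
    FrameResult(False, False),
    FrameResult(False, False),
    FrameResult(False, True),   # a false alarm
    FrameResult(False, False),
]

print(per_image_sensitivity(frames))  # 0.6
print(per_image_specificity(frames))  # 0.75
print(per_polyp_sensitivity(frames))  # 1.0
```

In the toy data every polyp is flagged in at least one frame, so per-polyp sensitivity reaches 100 percent even though some individual frames are missed. The same effect explains how dataset C can show a per-image sensitivity of 91.6 percent alongside a per-polyp sensitivity of 100 percent.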

“Wision AI is committed to realizing the clinical value of AI and mathematical medicine in a variety of indications, including gastroenterology, ophthalmology, neurology, and radiation-based imaging,” said JingJia Liu, CEO at Wision AI. “The results of this study demonstrate the power of our rigorous approach to developing deep-learning algorithms, which utilizes distinct datasets for training and validation and results in high levels of specificity and sensitivity that have the potential to improve diagnostic screening methods that are known to reduce disease risk, improve health outcomes and save lives.”

The first clinical trial of this technology was completed early this year, and its results demonstrated a significantly improved adenoma detection rate (ADR) in the AI-aided group. Full results from the trial were presented at the United European Gastroenterology Week 2018 in Vienna last week by the authors of the Nature Biomedical Engineering publication, and Pu Wang, MD, a gastroenterologist at Sichuan Provincial Hospital, received the National Scholar Award for this cutting-edge research.