Stephanie Van Ness, Sr. Marketing Communications Manager, Integrated Computer Solutions
12.02.19

Thirty years ago, gamers cheered the development of commercially available glove-based computer-control interfaces inspired by sign language. Launched in 1989, the Power Glove could control the Nintendo game console. Though the hand-gesture technology could only support simple commands, it was a marvel in its day.

Still, the broader use of this technology was limited outside research laboratories.

Today, the advent of AI and the IoT is changing that. More accurate accelerometers, sensors, infrared cameras, and related tech have led to the development of myriad air-gesture recognition devices. These days, gesture-recognition tech is big business.

According to Research and Markets, the Gesture Recognition Market is expected to register a compound annual growth rate of more than 27.9 percent between 2019 and 2024. The technology can be used to control complex robotic systems, smart appliances, medical devices, and mixed-reality environments, among other systems.
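To put that growth rate in perspective, here is a minimal sketch of the compounding arithmetic. It assumes the 2019 to 2024 window is treated as five annual compounding periods and uses 27.9 percent as a floor; the function name `growth_multiple` is purely illustrative and not from the report.

```python
def growth_multiple(cagr: float, years: int) -> float:
    """Total growth factor after compounding `cagr` once per year for `years` years."""
    return (1.0 + cagr) ** years


# Assumption: 2019 to 2024 counts as five annual compounding periods.
multiple = growth_multiple(0.279, 5)
print(f"Market size multiple after 5 years: {multiple:.2f}x")
```

Under those assumptions, a market growing at that rate would be roughly 3.4 times its starting size by the end of the period.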

Natural User Interfaces

So what exactly is gesture-recognition technology? Like voice, gesture-recognition technology is an example of a Natural User Interface (NUI).

“NUIs allow users to quickly learn how to control a device,” said Dorothy Shamonsky, Ph.D., chief UX strategy officer for Boston UX and UX design instructor at Brandeis University. “NUIs are designed to feel as natural as possible to the user. The NUI achieves this by creating a seamless interaction between human and machine.”

“If the interface itself seems to disappear, that’s a highly usable NUI,” she said. “This type of interface is well-suited to a host of applications, from autonomous vehicles to medical devices.”

This versatility explains why gesture tech is on the rise as the “next big thing” in interface control, even while voice (“Alexa, turn on the backyard lights”) remains popular. Companies from Microsoft to Intel have explored or are actively exploring gesture-recognition technology. Use cases extend to practically every corner of life. For instance, Chinese drone manufacturer DJI has developed autonomously flying drones designed for taking photos. They don’t require a remote control, as hand gestures are used to guide the drones.

In the automotive industry, manufacturers are on a quest to find more-natural ways to control the infotainment system so the driver doesn’t have to take his or her eyes off the road. While voice offers many advantages for hands- and eyes-free interaction, it has limitations, such as responding correctly in a noisy cabin. When voice is paired with gesture, drivers’ needs can be met safely and conveniently. The popularity of hand-gesture control is on the rise in this industry, with companies like BMW incorporating a camera-based gesture control system in some models.

Gesture recognition is also reaching beyond the driveway into our homes. Piccolo Labs is building a smart camera that lets users give Alexa a well-earned break and instead use body (not just hand) movements to control their homes. The company’s technology uses motion tracking, pose estimation, 3D reconstruction, and deep learning to quickly identify people and gestures.

Medical Applications

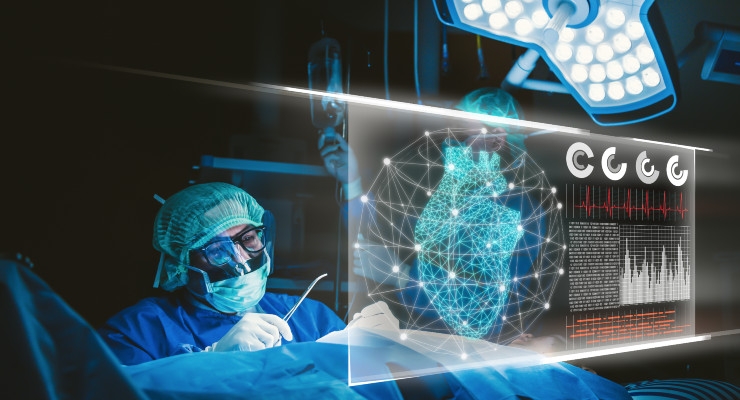

Nowhere is the use of gesture recognition more exciting than in the world of medicine, where the tech has the potential to solve some vexing problems, from decreasing the potential for healthcare-associated infections (HAIs) to modernizing the way surgeons interact with critical imaging equipment.

“There are situations where voice control is impractical or at least less than optimal. The ER and OR, for instance, can be chaotic with a lot of different voices from doctors and nurses, and multiple beeping machines. In this environment, using a voice interface can be tricky, but gesture control would be better suited and more beneficial,” explained Shamonsky.

In the operating room, surgeons are faced with competing demands—maintaining a strict boundary between what is and isn’t sterile, and accessing information and imaging pertinent to the patient and procedure. Surgeons can’t manipulate device touchscreens once scrubbed and gloved without breaking asepsis. And each scrub out costs $420 to $620.

That’s where gesture-controlled interfaces can be a huge benefit, as they lend themselves to challenging environments: for instance, situations in which a nurse or doctor may not be able to touch or reach a screen but still needs to interact with a device. With gesture tech, doctors could access a patient’s MRI, even make a few notes by “writing” in the air. No need for a keyboard.

According to the World Health Organization’s report on the burden of endemic healthcare-associated infections worldwide (2011), in the United States, 99,000 deaths each year are attributed to HAIs at a cost of $6.5 billion. In Europe, 37,000 deaths annually are attributed to HAIs. Surgical infections account for up to 20 percent of all HAIs.

Gesture recognition can reduce the potential for HAIs by offering a touchless interface for accessing patient information during procedures. In fact, this tech could replace traditional methods of viewing data: scrubbing out or finding a non-sterile circulating nurse to look up imaging.

According to device maker GestSure, the process of scrubbing out, consulting images using keyboard and mouse, and leaving the OR to scrub back in can take up to 10 minutes (an eternity when someone is under anesthesia) and cost as much as $62 per minute in OR time. To solve that problem, GestSure has developed a system that uses advanced sensors to allow doctors access to MRI, CT, and other imagery through simple hand gestures without breaking the sterile field.
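The GestSure figures lend themselves to a quick back-of-the-envelope check. The sketch below simply multiplies time by per-minute OR cost; the function name `scrub_out_cost` is hypothetical, and the inputs are the upper-bound values quoted above rather than measured data.

```python
def scrub_out_cost(minutes: float, cost_per_minute: float) -> float:
    """Estimated cost of OR time lost to a single scrub-out."""
    return minutes * cost_per_minute


# Upper-bound inputs quoted from GestSure: up to 10 minutes at up to $62 per minute.
worst_case = scrub_out_cost(10, 62)
print(f"Worst-case cost per scrub-out: ${worst_case:,.0f}")
```

The result, $620, lines up with the top of the $420 to $620 range cited earlier, though whether that range was derived this way is an assumption.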

Microsoft has also worked on a camera-based gesture-recognition system designed to allow a surgeon in a sterile environment to interact with a patient's imaging. Besides limiting the potential for contamination and infections, this type of system could speed procedures since no time—nor the surgeon’s focus—is lost breaking scrub.

Gesture NUIs have health-related uses outside the OR; GestureTek’s IREX is one example. Intended for use in rehabilitation facilities, IREX is an exercise system that uses a gesture-control interface to help patients improve balance, flexion, rotation, and other physical movements.

Another health-related gesture-controlled device is Somatix SmokeBeat—a wearable, sensor-based system created to help people quit smoking. Other commercially available gesture-controlled devices can detect a sudden fall, determine whether a patient has consumed enough water or taken their medication, monitor sleep habits, and even detect neurological malfunctioning.

Gesture Control Isn’t Perfect

Gesture UIs do have drawbacks. For instance, they typically require more computing power than other types of interfaces because they need to filter out a lot of “noise”: false positives caused by random, gesture-like information in the data field (say, a nurse standing next to or behind a surgeon who is using a gesture interface to write patient notes in the air).
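One common way to suppress spurious detections of this kind is to accept a gesture only after it has been seen with high confidence for several consecutive frames. The sketch below illustrates that idea; it is not taken from any product mentioned in this article. The per-frame `(label, confidence)` input format, the function name `stable_gestures`, and the threshold values are all assumptions.

```python
from typing import Iterable, List, Optional, Tuple

# Hypothetical per-frame recognizer output: (gesture label or None, confidence 0..1).
Frame = Tuple[Optional[str], float]


def stable_gestures(
    frames: Iterable[Frame],
    min_confidence: float = 0.8,
    min_frames: int = 3,
) -> List[str]:
    """Return gestures seen with enough confidence for `min_frames` frames in a row."""
    accepted: List[str] = []
    current: Optional[str] = None
    run = 0
    for label, confidence in frames:
        if label is None or confidence < min_confidence:
            # A weak or missing detection breaks the current run.
            current, run = None, 0
            continue
        if label == current:
            run += 1
        else:
            current, run = label, 1
        if run == min_frames:
            # Emit once per run, at the moment the run becomes long enough.
            accepted.append(label)
    return accepted


frames = [
    ("swipe", 0.90), (None, 0.0),                     # one-frame blip: ignored
    ("swipe", 0.85), ("swipe", 0.90), ("swipe", 0.95),
    ("wave", 0.50), ("wave", 0.90),                   # too weak, then too short
]
print(stable_gestures(frames))
```

Raising `min_frames` or `min_confidence` trades responsiveness for fewer accidental triggers, which is part of why tuning these interfaces takes extra computation and care.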

In addition, users take longer to acclimate to gesture-based interfaces than other types, including voice and mouse/keyboard, because precise movements must be mastered. And using gesture tech is more physically demanding than these other methods. While most people can easily click a mouse, using hand and arm motions to control a gesture-based interface, like a conductor leading a symphony orchestra, can lead to fatigue. And gesture control may be less inclusive for those with disabilities.

That means that despite its great promise, gesture control is not right in all circumstances and great thought must be given to the risks and rewards of every implementation.

The Takeaway

Though gesture-controlled interfaces won’t replace all other UIs in every situation, the technology’s potential is vast. By eliminating our dependency on keyboard and mouse (and offering usability in situations that challenge touch- and voice-controlled devices), these intuitive, touchless interfaces will fundamentally improve how we engage with many sectors of the built environment.

Head of content development, Stephanie Van Ness is Sr. marketing communications manager and chief storyteller at ICS & Boston UX. An experienced copywriter with a Boston University J-school degree, she writes about user experience (UX) design and innovations in technology, from self-driving vehicles to gesture-controlled medical devices. Her work has appeared in a number of industry publications.
